Cjklib 0.3 Release Announcement

Submitted by Christoph on 10 May, 2010 - 13:13

New release of cjklib with dictionary support

We would like to announce the new 0.3 release of cjklib, a Python-based programming library providing higher-level support for Chinese characters, also called Han characters.

After adding lots of new features and fixing some bugs, we believe that what is now version 0.3 is fit for public consumption. To name a few of the additions and changes:

- easy and powerful access to EDICT- and CEDICT-style dictionaries,

- support for MS Windows, including an exe installer,

- new documentation under cjklib.org [1],

- a new wiki for contributing language data,

- pronunciation data for Shanghainese, and finally

- broad coverage of stroke order data and corrections to component data.

See [2] for an extensive list of changes.

Several people contributed to the newest release:

- Uriah Eisenstein

- Hugo Lopez

- Hans-Jörg Happel

- Kellen Parker

- Allan Simon

- Christoph Burgmer

New dictionary support

Bilingual dictionaries are an important resource for CJK languages. With the newest release, cjklib also offers easy access to EDICT, CEDICT and other compatible dictionaries. Exact searches in translations and wildcard searches in all columns provide a flexible and powerful way to query those sources. Mixing characters and readings in a single query is also supported.
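To make the three kinds of queries concrete, here is a minimal pure-Python sketch over CEDICT-format lines (`Traditional Simplified [pin1 yin1] /gloss/`). It is not cjklib's implementation or API: the function names, the `*`/`?` wildcard syntax (borrowed from `fnmatch`) and the sample entries are assumptions made for illustration.

```python
import re
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import List, Sequence

# One CEDICT line: "Traditional Simplified [pin1 yin1] /gloss 1/gloss 2/"
CEDICT_LINE = re.compile(r"^(\S+) (\S+) \[([^\]]*)\] /(.*)/$")


@dataclass
class Entry:
    traditional: str
    simplified: str
    reading: str
    glosses: List[str]


def parse_cedict(lines: Sequence[str]) -> List[Entry]:
    """Parse CEDICT-style lines, skipping comments and malformed lines."""
    entries = []
    for line in lines:
        if line.startswith("#"):
            continue
        match = CEDICT_LINE.match(line.strip())
        if match:
            trad, simp, reading, glosses = match.groups()
            entries.append(Entry(trad, simp, reading, glosses.split("/")))
    return entries


def search_translation(entries: Sequence[Entry], word: str) -> List[Entry]:
    """Exact search in translations: one gloss must equal the query (case-insensitive)."""
    word = word.lower()
    return [e for e in entries if any(g.lower() == word for g in e.glosses)]


def search_wildcard(entries: Sequence[Entry], pattern: str) -> List[Entry]:
    """Wildcard search ('*', '?') over headwords and reading."""
    return [
        e for e in entries
        if any(fnmatchcase(col, pattern) for col in (e.traditional, e.simplified, e.reading))
    ]


def search_mixed(entries: Sequence[Entry], tokens: Sequence[str]) -> List[Entry]:
    """Mixed query: token i is either a character or a numbered-Pinyin syllable
    and must match position i of the headword or of the reading."""
    results = []
    for e in entries:
        syllables = e.reading.lower().split()
        if not (len(tokens) == len(syllables) == len(e.simplified) == len(e.traditional)):
            continue
        if all(
            tok in (e.traditional[i], e.simplified[i]) or tok.lower() == syllables[i]
            for i, tok in enumerate(tokens)
        ):
            results.append(e)
    return results
```

For example, `search_mixed(entries, ["北", "jing1"])` finds 北京 from one character plus one reading.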

CharacterDB

A new project called CharacterDB [3] was started to gather character data for cjklib. It is based on MediaWiki, the software behind Wikipedia, and will offer easy access to the data used in cjklib. The wiki is still in beta; by the next release we plan to manage all glyph information through it.

About cjklib

Cjklib tries to fill a current void in the support for Chinese characters by focusing on visual appearance and reading-based data. While many lexical sources already exist, there is no layer that provides the data in an accessible and consistent way, which burdens developers with reinventing many basic functions. This project aims to channel different efforts in order to give developers a consistent view, independent of the chosen language. The library directly targets developers and experienced users, its overall goal being to improve the coverage of applications for end users.

Cjklib is open source, released under the GNU LGPL. You are free to use this software and invited to take part in its further development.

If you wish to know more about cjklib, its website [1] is a good starting point. For a quick overview of some of the functions offered, you might want to look at [4].

Packages are readily available. See [5] on how to install.

The cjklib developers

cjklib-devel@googlegroups.com

[1] http://cjklib.org/

[2] http://cjklib.googlecode.com/svn/tags/release-0.3/changelog

[3] http://characterdb.cjklib.org/

[4] http://code.google.com/p/cjklib/wiki/Screenshots

[5] http://code.google.com/p/cjklib/wiki/QuickStart

Detecting Code-Switch Events Based on Textual Features

Submitted by Christoph on 29 April, 2010 - 17:58

My thesis is finally printed and now also available as a PDF. It is called Detecting Code-Switch Events Based on Textual Features and focuses on detecting code-switching between English and Mandarin Chinese. I have already found a typo, but I'll take it with the Latin proverb: errare humanum est :)

Shanghainese syllable table (IPA)

Submitted by Christoph on 21 April, 2010 - 22:58

I've already made tables of Pinyin for Mandarin, and of Jyutping and Cantonese Yale for Cantonese. Here is now a table for Shanghainese. As there seems to be no accepted or standard romanisation for the Shanghai Wu dialect, IPA is the system at hand.

This table is based on the Wu Phonetics Corpus by Kellen Parker with contributions from Allan Simon.

Finals: ɑ, ɑ̃, ã, ɑˀ, ɛ, əˀ, ən, əl, ɿ, ɤ, i, iɑ, iɑ̃, iɑˀ, iɤ, iɔ, iɪˀ, in, ioˀ, ioŋ, o, oˀ, oŋ, ø, ɔ, ɔˀ, u, uɑ̃, uɑ, uɑˀ, uɛ, uəˀ, uən, y, yn, yø, yɪˀ, yəˀ

| Initial | Syllables |
| --- | --- |
| (none) | ɑ, ɑˀ, ɛ, i, iɑˀ, iɔ, iɪˀ, in, ioˀ, oˀ, ø, ɔ, uɛ |
| ɦ | ɦɑ, ɦɑ̃, ɦɛ, ɦəˀ, ɦən, ɦəl, ɦɤ, ɦi, ɦiɑ, ɦiɑ̃, ɦiɑˀ, ɦiɤ, ɦiɔ, ɦiɪˀ, ɦin, ɦioŋ, ɦo, ɦoˀ, ɦoŋ, ɦø, ɦɔ, ɦu, ɦuɑ̃, ɦuɑ, ɦuɑˀ, ɦuɛ, ɦuəˀ, ɦuən, ɦy, ɦyn, ɦyø, ɦyɪˀ |
| h | hɑ, hɑ̃, hɑˀ, hɛ, həˀ, hɤ, ho, hoˀ, hoŋ, hø, hɔ, hu, huɑ̃, huɑ, huɑˀ, huɛ, huən |
| ŋ | ŋ, ŋɑ, ŋɑ̃, ŋɛ, ŋəˀ, ŋɤ, ŋo, ŋoˀ, ŋø, ŋɔ, ŋu |
| k | kɑ, kɑ̃, kɛ, kəˀ, kən, kɤ, ko, koˀ, koŋ, kø, kɔ, ku, kuɑ̃, kuɑ, kuɑˀ, kuɛ, kuəˀ, kuən |
| kʰ | kʰɑ, kʰɑ̃, kʰɑˀ, kʰɛ, kʰəˀ, kʰən, kʰɤ, kʰo, kʰoˀ, kʰoŋ, kʰø, kʰɔ, kʰu, kʰuɑ̃, kʰuɑ, kʰuəˀ, kʰuən |
| g | gɑ, gɛ, gəˀ, gən, goˀ, goŋ, gɔ, gu, guɛ |
| ɲ | ɲi, ɲiɑ̃, ɲiɤ, ɲiɔ, ɲiɪˀ, ɲin, ɲioŋ, ɲy |
| ʨ | ʨi, ʨiɑ, ʨiɑ̃, ʨiɑˀ, ʨiɤ, ʨiɔ, ʨiɪˀ, ʨin, ʨioŋ, ʨy, ʨyn, ʨyəˀ |
| ʨʰ | ʨʰi, ʨʰiɑ, ʨʰiɑ̃, ʨʰiɤ, ʨʰiɔ, ʨʰiɪˀ, ʨʰin, ʨʰy, ʨʰyəˀ |
| ʥ | ʥi, ʥiɑ, ʥiɑ̃, ʥiɤ, ʥiɔ, ʥiɪˀ, ʥin, ʥioŋ, ʥy, ʥyn, ʥyəˀ |
| ɕ | ɕi, ɕiɑ, ɕiɑ̃, ɕiɤ, ɕiɔ, ɕiɪˀ, ɕin, ɕioŋ, ɕyn, ɕyəˀ |
| ʑ | ʑi, ʑiɑ, ʑiɑ̃, ʑiɤ, ʑin |
| m | m̩, mɑ, mɑ̃, mɑˀ, mɛ, məˀ, mən, mɤ, mi, miɔ, miɪˀ, min, mo, moˀ, moŋ, mø, mɔ, mu |
| b | bɑ, bɑ̃, bã, bɑˀ, bɛ, bən, bi, biɪˀ, bin, bo, boˀ, boŋ, bø, bɔ, bu |
| p | pɑ, pɑ̃, pɑˀ, pɛ, pən, pi, piɪˀ, pin, po, poˀ, pø, pɔ, pu |
| pʰ | pʰɑ, pʰɑ̃, pʰɑˀ, pʰɛ, pʰən, pʰi, pʰiɪˀ, pʰin, pʰoˀ, pʰoŋ, pʰø, pʰɔ, pʰu |
| l | lɑ, lɑ̃, lã, lɑˀ, lɛ, lən, lɤ, li, liɑ̃, liɤ, liɔ, liɪˀ, lin, loˀ, loŋ, lø, lɔ, lɔˀ, lu |
| n | nɑ, nɑ̃, nɑˀ, nɛ, nən, no, noˀ, noŋ, nø, nɔ, nu |
| t | tɑ, tɑ̃, tɑˀ, tɛ, təˀ, tən, tɤ, ti, tiɑ, tiɤ, tiɔ, tiɪˀ, tin, toˀ, toŋ, tø, tɔ, tu |
| tʰ | tʰɑ, tʰɑ̃, tʰɑˀ, tʰɛ, tʰəˀ, tʰən, tʰɤ, tʰi, tʰiɔ, tʰiɪˀ, tʰin, tʰoˀ, tʰoŋ, tʰø, tʰɔ, tʰu |
| d | dɑ, dɑ̃, dɑˀ, dɛ, dəˀ, dən, dɤ, di, diɔ, diɪˀ, din, doˀ, doŋ, dø, dɔ, du |
| ʦ | ʦɑ̃, ʦɛ, ʦəˀ, ʦən, ʦɿ, ʦɤ, ʦo, ʦoŋ, ʦø, ʦɔ, ʦu |
| ʦʰ | ʦʰɑ, ʦʰɑ̃, ʦʰɛ, ʦʰəˀ, ʦʰən, ʦʰɿ, ʦʰɤ, ʦʰo, ʦʰoˀ, ʦʰoŋ, ʦʰø, ʦʰɔ, ʦʰu |
| s | sɑ, sɑ̃, sɑˀ, sɛ, səˀ, sən, sɿ, sɤ, so, soŋ, sø, sɔ, su |
| z | zɑ, zɑ̃, zã, zɑˀ, zɛ, zəˀ, zən, zɿ, zɤ, zo, zoŋ, zø, zɔ, zu |
| f | fɑ̃, fɑˀ, fɛ, fən, fɤ, fi, foˀ, foŋ, fu |
| v | vɑ, vɑ̃, vɑˀ, vɛ, vəˀ, vən, vɤ, vi, voˀ, voŋ, vu |

There are surely some errors yet to be fixed. I'm not too happy with the ordering of initials and finals, but I can't work out a good order based on articulation characteristics.

Update: I removed some "duplicate" forms and regrouped initials and finals.
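Every cell of the table is an initial followed by a final, so the full initial/final grid can be rebuilt from a flat list of syllables. A minimal sketch, assuming the initials listed in the table and treating the syllabic nasals ŋ and m̩ as syllables without a final; the helper names are hypothetical and this is not how the table was actually produced.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Initials as used in the table; the zero initial is represented by "".
INITIALS = ["ɦ", "h", "ŋ", "k", "kʰ", "g", "ɲ", "ʨ", "ʨʰ", "ʥ", "ɕ", "ʑ",
            "m", "b", "p", "pʰ", "l", "n", "t", "tʰ", "d", "ʦ", "ʦʰ",
            "s", "z", "f", "v"]
# Syllabic nasals have no final; they are filed under their nasal's row.
SYLLABIC = {"ŋ": "ŋ", "m\u0329": "m"}


def split_syllable(syllable: str) -> Tuple[str, str]:
    """Split an IPA syllable into (initial, final) by longest-prefix match."""
    if syllable in SYLLABIC:
        return SYLLABIC[syllable], ""
    # Try longer initials first so that aspirated "kʰ" wins over plain "k".
    for initial in sorted(INITIALS, key=len, reverse=True):
        if syllable.startswith(initial) and len(syllable) > len(initial):
            return initial, syllable[len(initial):]
    return "", syllable  # zero initial


def build_grid(syllables: Iterable[str]) -> Dict[str, Dict[str, str]]:
    """Rows keyed by initial, columns keyed by final, cells hold the syllable."""
    grid: Dict[str, Dict[str, str]] = defaultdict(dict)
    for syllable in syllables:
        initial, final = split_syllable(syllable)
        grid[initial][final] = syllable
    return dict(grid)
```

Keeping the grid as nested dictionaries leaves the row and column order open, so a different ordering of initials and finals can be tried without touching the data.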

A technical post on transliterations

Submitted by Christoph on 17 March, 2010 - 14:32

This blog entry provides a nice mix of transliterations, C++, cjklib, ICU and language bindings in Python.

ICU (International Components for Unicode) is an open-source Unicode support library from IBM; I would call it the Unicode implementation. It is used by IBM, Google, Apple and others. SQLite, which offers only limited script support, points to ICU for full Unicode support; the Python team decided to implement only basic Unicode support and leave locale-based handling to ICU; and PHP's intl library builds on top of ICU. ICU offers many more functions than one would expect from a library for an "encoding". But Unicode is far more than an encoding: alongside encoding the world's scripts, it offers solutions for multilingual text handling.

ICU is written in C/C++ and Java. A Python binding of the C++ implementation is offered via PyICU.

So I have already covered three of the five topics above; let's see what's missing.

One feature I recently set my eyes on is ICU's Transliteration module. The name is a bit of a misnomer, as the module supports a wide range of text transformations, but its original task was converting text from one script into another. As this is one of cjklib's strong points, I took a closer look.

The Transliteration module implements an interface to a wide range of transformations and also offers on-the-fly transformations for keyboard input. I was twittering (actually I was denting) about a similar Google project recently, and I'd be surprised if Google didn't build on the support offered by ICU here.
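On-the-fly transformation is harder than batch conversion because a keystroke may be the start of a longer rule, and committed output cannot be revisited. A minimal sketch of greedy longest-match rule application that holds back ambiguous input, assuming a hypothetical toy rule table; it does not use ICU's rule syntax and is not ICU's actual incremental algorithm.

```python
from typing import Dict, List


class IncrementalTransliterator:
    """Apply longest-match rules keystroke by keystroke.

    Input is committed only once no longer rule can still match it;
    committed output is never changed afterwards."""

    def __init__(self, rules: Dict[str, str]) -> None:
        self.rules = rules
        self.pending = ""
        self.output: List[str] = []

    def _could_extend(self, prefix: str) -> bool:
        # True if some longer rule starts with the pending text.
        return any(key != prefix and key.startswith(prefix) for key in self.rules)

    def feed(self, char: str) -> str:
        """Add one keystroke and return the output committed so far."""
        self.pending += char
        self._flush(final=False)
        return "".join(self.output)

    def finish(self) -> str:
        """End of input: commit everything still pending."""
        self._flush(final=True)
        return "".join(self.output)

    def _flush(self, final: bool) -> None:
        while self.pending:
            if not final and self._could_extend(self.pending):
                return  # ambiguous: wait for more input
            # Commit the longest rule matching a prefix of the pending text.
            for length in range(len(self.pending), 0, -1):
                head = self.pending[:length]
                if head in self.rules:
                    self.output.append(self.rules[head])
                    self.pending = self.pending[length:]
                    break
            else:
                # No rule applies: copy the character through unchanged.
                self.output.append(self.pending[0])
                self.pending = self.pending[1:]
```

With rules such as `{"p": "π", "ps": "ψ"}`, typing `p` shows nothing until the next key decides between π and ψ.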

You can try out a nice demo. For example, use my backwards transformation called "Back", modeled after a script I wrote some time ago. Sadly, there seems to be no transformation that reverses a string (which wouldn't make much sense in interactive mode anyway, as a processed string cannot be touched again). Just enter "Back" into the small text area above "Output 1" and enter, let's say, "no devil lived on" into the text area below "Input". When you click "Transform", you should see the characters flipped: uo pǝʌıl lıʌǝp ou.
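A transform like "Back" boils down to a per-character mapping. A minimal Python sketch, assuming a small hypothetical table of upside-down look-alikes (not the actual rules of "Back"). Reversal is a separate step, the one the demo lacks; because "no devil lived on" is a palindrome, flipping alone already gives the right result there.

```python
# Hypothetical flip table: each letter maps to a look-alike rotated by 180 degrees.
# Letters that look the same upside down (o, l, s, x, z) are not listed and pass through.
FLIP_TABLE = str.maketrans({
    "a": "\u0250",  # ɐ
    "b": "q", "q": "b",
    "d": "p", "p": "d",
    "e": "\u01dd",  # ǝ
    "h": "\u0265",  # ɥ
    "i": "\u0131",  # ı (dotless i)
    "m": "\u026f",  # ɯ
    "n": "u", "u": "n",
    "r": "\u0279",  # ɹ
    "t": "\u0287",  # ʇ
    "v": "\u028c",  # ʌ
    "w": "\u028d",  # ʍ
    "y": "\u028e",  # ʎ
})


def flip(text: str) -> str:
    """Replace every character by its upside-down look-alike."""
    return text.translate(FLIP_TABLE)


def upside_down(text: str) -> str:
    """Flip the characters and reverse their order, as if rotating the text."""
    return flip(text)[::-1]
```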

The beauty of the Transliterator interface is that combining transforms is very easy. Transforms can be restricted to certain scripts, and the inverse transform can be provided automatically. For example, NFD; [:Nonspacing Mark:] Remove; NFC decomposes characters so that diacritical marks become separate characters, applies the Remove transform to those marks and recomposes what remains: Bēijǐng becomes Beijing.
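The same three steps can be mimicked with Python's standard `unicodedata` module. This sketch does not use ICU; it only chains plain functions in the order of the compound ID, relying on the fact that ICU's `[:Nonspacing Mark:]` set is the Unicode general category `Mn`. The function names are illustrative.

```python
import unicodedata
from functools import reduce
from typing import Callable

Transform = Callable[[str], str]


def nfd(text: str) -> str:
    # Canonical decomposition: "ē" becomes "e" + U+0304 COMBINING MACRON.
    return unicodedata.normalize("NFD", text)


def remove_nonspacing_marks(text: str) -> str:
    # Drop every character of general category Mn (Nonspacing_Mark).
    return "".join(c for c in text if unicodedata.category(c) != "Mn")


def nfc(text: str) -> str:
    # Recompose whatever combining sequences remain.
    return unicodedata.normalize("NFC", text)


def compound(*steps: Transform) -> Transform:
    """Chain transforms left to right, like the IDs in 'NFD; ...; NFC'."""
    return lambda text: reduce(lambda acc, step: step(acc), steps, text)


strip_diacritics = compound(nfd, remove_nonspacing_marks, nfc)
```

`strip_diacritics("Bēijǐng")` returns `"Beijing"`.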

You can even find transliterations for Japanese, Korean and Chinese (Pinyin). The latter produces standard Pinyin from tones given as numbers (apart from incorrect conversions of the rare syllables ng and hng). This clearly overlaps with what cjklib offers. But since ICU provides a standard interface for transliterations, why not register the conversions implemented by cjklib with ICU?
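For reference, here is a minimal sketch of converting numbered Pinyin to tone marks using the usual placement rule: the mark goes on a or e if present, on the o of "ou", otherwise on the last vowel, and on the nasal for syllabic forms such as ng and hng. It is a plain-Python illustration, not ICU's or cjklib's implementation; accepting "v" and "u:" for ü is an assumption.

```python
import re
import unicodedata

# Combining tone marks for tones 1-4; tone 5 (neutral) gets no mark.
TONE_MARKS = {"1": "\u0304", "2": "\u0301", "3": "\u030c", "4": "\u0300"}
VOWELS = "aeiouü"


def _mark_position(syllable: str) -> int:
    """Index of the letter that carries the tone mark, or -1 if none."""
    lower = syllable.lower()
    for vowel in "ae":
        if vowel in lower:
            return lower.index(vowel)
    if "ou" in lower:
        return lower.index("ou")
    vowel_positions = [i for i, c in enumerate(lower) if c in VOWELS]
    if vowel_positions:
        return vowel_positions[-1]
    # Syllabic nasals (m, n, ng, hm, hng) carry the mark on the nasal.
    for i, c in enumerate(lower):
        if c in "mn":
            return i
    return -1


def numbered_to_marked(text: str) -> str:
    """Convert e.g. 'Bei3jing1' to 'Běijīng'."""
    def convert(match: "re.Match[str]") -> str:
        syllable, tone = match.group(1), match.group(2)
        syllable = syllable.replace("u:", "ü").replace("v", "ü").replace("V", "Ü")
        mark = TONE_MARKS.get(tone, "")
        pos = _mark_position(syllable) if mark else -1
        if pos >= 0:
            syllable = syllable[: pos + 1] + mark + syllable[pos + 1:]
        # NFC merges letter + combining mark into precomposed characters where they exist.
        return unicodedata.normalize("NFC", syllable)

    return re.sub(r"([A-Za-züÜ:]+)([1-5])", convert, text)
```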

No sooner said than done. PyICU only recently gained support for the Transliteration interface, and as instantiating the class from Python was not yet supported, I got out my C++-fu (I didn't really know I had any) and made the C++ layer call a Python implementation. My changes (branch PythonTransliterator on GitHub) have already been partly merged into the main version, making them available in standard installations.

There is a short example that registers any cjklib conversion with ICU and makes it available through the standard methods. I don't yet know whether there is a sensible way to combine ICU's existing transforms with the transliterations currently offered by cjklib. That would be the icing on the cake.
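The underlying idea (a framework that only knows a base class, calling into a Python subclass that has been registered under an ID) can be illustrated without ICU. The following pure-Python stand-in is not PyICU's API: the class and method names are hypothetical and only loosely mirror ICU's `handleTransliterate` override and its `registerInstance`/`createInstance` pattern. In the real setup, the wrapped function would be a cjklib reading conversion; here a simple string reversal stands in.

```python
from typing import Callable, Dict


class Transliterator:
    """Toy base class: transliterate() delegates to handle_transliterate(),
    which subclasses override. A class-level registry maps IDs to instances."""

    _registry: Dict[str, "Transliterator"] = {}

    def __init__(self, trans_id: str) -> None:
        self.trans_id = trans_id

    def transliterate(self, text: str) -> str:
        # The caller only knows the base class; the override does the work.
        return self.handle_transliterate(text)

    def handle_transliterate(self, text: str) -> str:
        raise NotImplementedError

    @classmethod
    def register_instance(cls, instance: "Transliterator") -> None:
        cls._registry[instance.trans_id] = instance

    @classmethod
    def create_instance(cls, trans_id: str) -> "Transliterator":
        return cls._registry[trans_id]


class FunctionTransliterator(Transliterator):
    """Adapter exposing any str -> str conversion under a transform ID."""

    def __init__(self, trans_id: str, convert: Callable[[str], str]) -> None:
        super().__init__(trans_id)
        self._convert = convert

    def handle_transliterate(self, text: str) -> str:
        return self._convert(text)


# Hypothetical ID: register a plain string reversal and look it up by name.
Transliterator.register_instance(FunctionTransliterator("Demo-Reverse", lambda s: s[::-1]))
```

Once registered, client code only needs the ID, e.g. `Transliterator.create_instance("Demo-Reverse").transliterate("abc")`.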

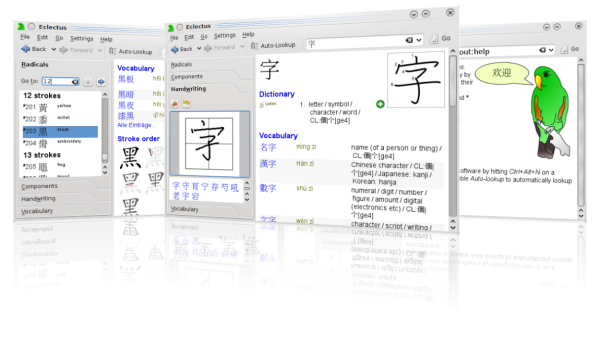

Eclectus screenshot (made with screenie)

Submitted by Christoph on 1 February, 2010 - 19:46

New screenshot for the Google Code page of Eclectus, made using screenie.